The Assumption Nobody Wrote Down: Why Single-Number Plans Break

Most planning failures get diagnosed as forecast failures. The number was wrong. The model missed something. Next time we'll use better data or a more sophisticated method.

This diagnosis feels satisfying because it points to a fixable technical problem. It is also usually wrong.

The forecast is rarely where plans break. Plans break on the assumptions nobody documented: the ones that felt too obvious to write down and turned out not to be obvious at all.

What the handoff was actually doing

Every forecast that travels from the person who built it to the person who acts on it crosses a boundary. That boundary used to do something most of us didn't notice until it was gone.

When the forecaster handed off to the planner, the number had to be explained. Not just delivered. The planner asked questions and the forecaster answered them. In that exchange, assumptions living silently inside the model had to be articulated out loud. The handoff created friction, and that friction was a forcing function.

Remove the boundary and the articulation stops. The assumptions keep traveling, but now they do it silently.

I know what a well-designed version of this looks like because I worked inside one. The process ran in layers: forecasters reviewed assumptions among themselves first, then the planning team and manager joined, then the stakeholders who owned operations reviewed it together. Each handoff was a moment where an assumption that felt obvious to the person who made it had to survive contact with someone who hadn't made it. Mistakes were caught early. There was time to get answers before plans were committed. It was imperfect and sometimes slow. It was also the reason invisible assumptions rarely stayed invisible for long.

In the role where I was the forecaster, the planner, and the source of intraday patterns simultaneously, none of those checkpoints existed. I hadn't noticed their absence until I felt the consequences.

When every layer is right and the plan is still wrong

I was a seasoned forecaster when this happened. I owned the forecast and the plan. There was no handoff because there was no second person. Whatever the number assumed, it carried forward through the entire planning process unchallenged.

My job was to produce staffing plans for a customer support operation at two levels: weekly team requirements and intraday scheduling requirements in 30-minute intervals. The weekly plan sets the broad shape. The intraday plan is what agents actually work from, hour by hour.

Intraday plans are built weeks or months ahead, not days. The lead time exists so that everyone downstream, including external support partners, has enough time to act on them. That lead time also means the patterns used to build the plan are older than they appear. When I built September's intraday profiles, I was working from June and early July.

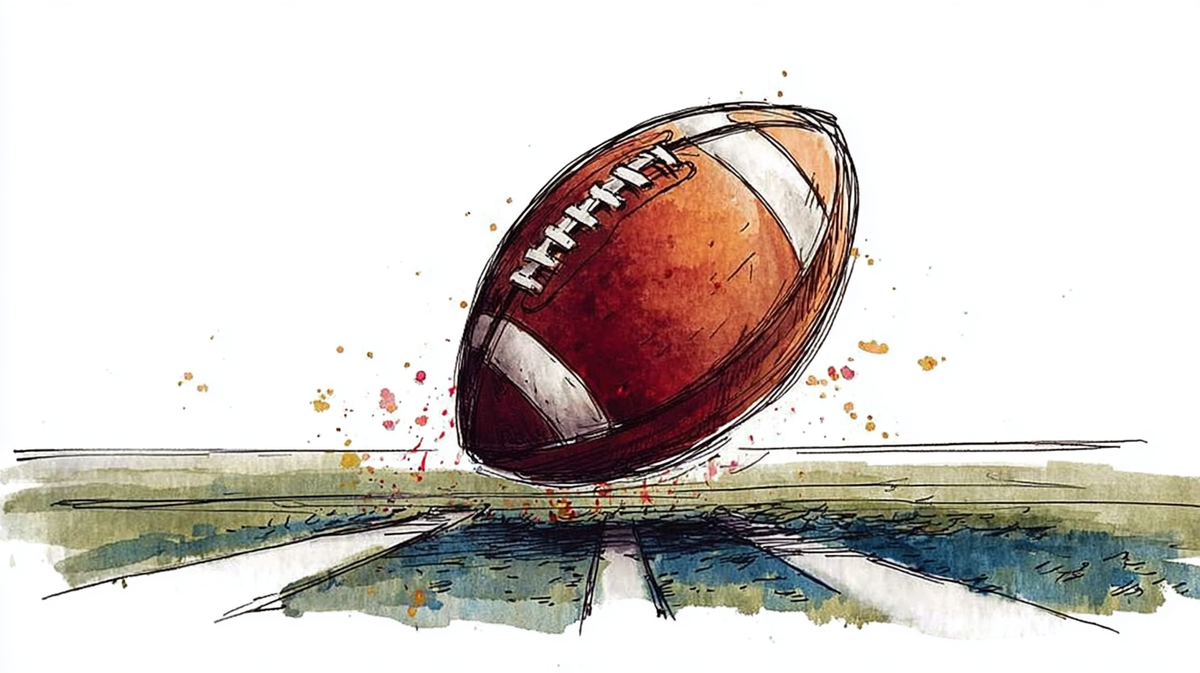

There is no NFL in June and July.

The NFL season starts in September. On Sundays during the season, contact patterns shift in ways that have no analog in summer months. Volume surges in specific windows tied to game schedules. The intraday profile that was correct for a summer Sunday was wrong for a fall Sunday in ways that didn't show up in the weekly totals at all. The weekly numbers looked fine. The shape of the day was wrong.
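To make the gap between "the weekly numbers looked fine" and "the shape of the day was wrong" concrete, here is a minimal Python sketch. It assumes an intraday profile is simply each 30-minute interval's share of the day's volume, and that a daily forecast is spread across intervals with that profile. The helper names and all volumes are hypothetical and invented for illustration. They are not the data or tooling from the operation described here.

```python
# Hypothetical helpers; volumes are invented for illustration.
INTERVALS_PER_DAY = 48  # 30-minute intervals

def build_profile(interval_volumes):
    """Turn one day's interval volumes into shares that sum to 1."""
    total = sum(interval_volumes)
    return [v / total for v in interval_volumes]

def apply_profile(daily_forecast, profile):
    """Spread a daily volume forecast across intervals using a profile."""
    return [daily_forecast * share for share in profile]

def worst_interval_gap(planned, actual):
    """Largest absolute planned-vs-actual difference in any single interval."""
    return max(abs(p - a) for p, a in zip(planned, actual))

summer_sunday = [10] * INTERVALS_PER_DAY      # flat day, 480 contacts
# Same 480 contacts, but intervals 26-31 (13:00-16:00 if interval 0 starts
# at midnight) carry a surge the summer template has never seen.
fall_sunday = [8] * 26 + [24] * 6 + [8] * 16
assert len(fall_sunday) == INTERVALS_PER_DAY

planned = apply_profile(sum(fall_sunday), build_profile(summer_sunday))
print(round(sum(planned)), sum(fall_sunday))            # 480 480: daily totals agree
print(round(worst_interval_gap(planned, fall_sunday)))  # 14: the shape does not
```

In the surge window the plan carries about 10 contacts per interval against 24 actual, a gap that no daily or weekly total will ever reveal.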

There were two structural problems underneath this. In many organizations, daily data is kept for years, but 30-minute interval data is retained for only a few months. The historical intraday profiles needed to detect a pattern like NFL Sundays would not have been available even if I had known to look for them. And I hadn't grown up watching American football. I had no cultural frame that would have prompted the question. Without the question, the assumption that summer profiles were adequate templates for fall Sundays traveled forward through a correctly built model into two months of committed plans.

By the second weekend of September, it was clear this was a pattern, not noise. People were asking questions I didn't have good answers to.

When I traced it back, every individual layer of the forecast was defensible. The weekly model was right. The intraday templating followed standard practice. The failure lived in the seam between the methodology and a seasonal driver that it had no mechanism to detect, that the data infrastructure had no history to surface, and that my own cultural frame had no reason to flag.

The consequence didn't stay operational. What I had to correct wasn't live staffing alone. It was two months of committed plans, revised under scrutiny, with the confidence of the people who depended on my work visibly shaken. That kind of failure does something harder to correct than a staffing plan. It introduces self-doubt that runs deeper than the specific mistake. Not just: did I miss something here? But: do I actually understand what this work requires of me?

What it requires, I came to understand, is more than a well-calibrated model. A good forecast is not just the result of a good calculation. It is a representation of the risk you are assuming by building a plan on a single version of the future. When the assumptions behind that version are invisible, the risk is invisible too. The organization commits to a plan without knowing what it is betting on. That is not a forecasting problem. It is a planning problem.

This is the same problem I wrote about, from a different angle, in my first article: the danger of treating uncertainty as something to hide inside a number rather than something to represent honestly, so that decisions can be made with open eyes.

What I had missed was the forcing function. I was the whole pipeline and there was no boundary for the assumption to surface at. No peer on the other side of the handoff to ask the question I didn't know to ask myself.

The structure changed before anyone said so

This pattern is becoming more common, not less.

Organizations have been flattening for years. AI tools have accelerated the compression. The peer relationships that used to exist between forecasters and planners, between analysts and budget preparers, between the person who built the number and the person who acted on it, are being consolidated into single roles justified by efficiency and enabled by tooling that makes one person genuinely capable of doing what two used to do.

The efficiency case is real. The throughput goes up. The coordination overhead goes down.

What doesn't get named in that transaction is what gets removed.

The peer handoff was not just a coordination mechanism. It was the moment where a number built inside one person's assumptions had to survive contact with a second person's questions. That contact was imperfect and sometimes adversarial and sometimes slow. It was also the last natural checkpoint where an assumption that felt like a background condition had to become a foreground statement.

Remove the peer and the checkpoint disappears. The assumptions keep traveling without anyone asking.

The practitioner carrying the consolidated role inherits both the efficiency gain and the exposure. In most organizations, when an invisible assumption does its damage, they absorb the consequence alone. The culture that removed the structural check does not typically acknowledge the failure it made possible.

Rebuilding the forcing function deliberately

The fix is not a better model. The multi-pass seasonality problem is a real structural limitation of classical time-series methods, and better tooling will eventually address parts of it. The data retention problem is a real infrastructure gap, and organizations that invest in storing granular historical data will have more to work with. These are worth solving.

But neither solves the assumption documentation problem. A more sophisticated model built on the same undocumented assumptions will fail for the same reasons. More historical data accessed by one person with no peer to ask questions will surface patterns that person knows to look for and miss the ones they don't.

The deeper issue is this: forecasting principles are more or less universal. The numbers that feed them are not. You need to understand the industry you are forecasting for, the customers whose behavior drives your signal, and the institutional calendar that shapes demand in ways no historical dataset will show you. That knowledge is not acquired in a quarter or a year. I worked at Intuit for more than five years, and every tax season taught me something new. Industry knowledge is itself a form of assumption documentation, and it may be the most pervasive form of all, because every model quietly assumes you understand the context it is operating in.

The organization has no obligation to build this knowledge for you. It can surface information proactively, and when it does, that is valuable. But the discipline of asking belongs to the craft. After the NFL failure I built two practices that became permanent parts of how I worked.

The first was an explicit calendar of events that could change daily or intraday patterns: holidays, sporting events, sales campaigns, retailer-driven holidays. In retail these evolve year to year. Amazon Prime Days, Target Circle Week, and Walmart Days are events with no confirmed date until weeks before they happen. I maintained that calendar consistently, surfaced it during every stakeholder review, and passed it to the next forecaster when I left.

The second was a structured stakeholder interview two weeks before forecast work began. Every month I hosted interviews with each stakeholder and asked about coming changes or new initiatives that could impact demand. Even if you don't get what you need, the act of asking makes the invisible visible.

These practices were a direct consequence of the NFL failure. They were also the forcing function I rebuilt in the absence of a peer.
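The first practice, the event calendar, can be sketched as a small data structure plus one check that would have caught the NFL case: which events fall inside the plan window but never occur in the history window the intraday profiles were built from. This is a minimal Python sketch under assumptions of my own. The class and function names, the event entries, and every date are hypothetical and illustrative, not a real schedule or a real tool.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class CalendarEvent:
    name: str
    dates: list[date]        # every date the event is expected to affect
    confirmed: bool = True   # retail events may stay unconfirmed until weeks out

def weekly(first: date, count: int) -> list[date]:
    """Dates for an event that recurs every 7 days."""
    return [first + timedelta(weeks=i) for i in range(count)]

def occurs_between(event: CalendarEvent, start: date, end: date) -> bool:
    """True if any of the event's dates falls inside [start, end]."""
    return any(start <= d <= end for d in event.dates)

def blind_spots(calendar, history, plan):
    """Events that hit the plan window but never occur in the history window
    the intraday profiles were built from: the profiles cannot reflect them."""
    return [e.name for e in calendar
            if occurs_between(e, *plan) and not occurs_between(e, *history)]

def unconfirmed(calendar, plan):
    """Events in the plan window whose dates still need confirming."""
    return [e.name for e in calendar
            if not e.confirmed and occurs_between(e, *plan)]

# Illustrative entries and windows (hypothetical dates, not a real schedule).
calendar = [
    CalendarEvent("Football Sundays", weekly(date(2023, 9, 10), 18)),
    CalendarEvent("Independence Day", [date(2023, 7, 4)]),
    CalendarEvent("Retailer sale event", [date(2023, 10, 10)], confirmed=False),
]
HISTORY = (date(2023, 6, 1), date(2023, 7, 15))  # June to mid-July patterns
PLAN = (date(2023, 9, 1), date(2023, 10, 31))    # September and October plans

print(blind_spots(calendar, HISTORY, PLAN))
print(unconfirmed(calendar, PLAN))
```

The point of the design is that the check compares two windows rather than inspecting the model: an event can be invisible to a perfectly fitted profile simply because it never happened during the months the profile was built from.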

What they taught me to ask, before any forecast left my desk, were three questions I now consider part of the minimum discipline of the role.

What is on the horizon that could change daily or intraday patterns? This is a calendar review, not a model review: look specifically for events that historical data cannot see because they are new, because they fall outside the training window, or because the organization hasn't shared them yet.

What do you actually know about each event you identified? Draw on historical data if it exists, estimates from other teams, and stakeholder input. This question forces a distinction between what you can model, what you can approximate, and what you are genuinely operating blind on. The third category is the one that requires explicit documentation.

Does this event actually move the needle? Not everything on the calendar matters equally. Some events that look significant turn out to have no measurable effect on the forecast. Some that look minor turn out to be load-bearing. The sensitivity test is what separates an assumption worth carrying forward from noise.
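The second and third questions can be sketched together as a small triage step. This is a minimal Python sketch under loudly stated assumptions: the evidence labels, the uplift ranges, and the 10% materiality cutoff are all hypothetical. The screen also only checks the size of a plausible volume change; a fuller sensitivity test would rerun the staffing plan at each end of the range and compare the results.

```python
from dataclasses import dataclass

# Hypothetical mapping from the evidence behind an assumption to how it is handled.
CATEGORY = {"historical": "model", "estimate": "approximate", "none": "blind"}

@dataclass
class EventAssumption:
    name: str
    evidence: str       # "historical", "estimate", or "none"
    low_uplift: float   # plausible low-end volume change, e.g. -0.05 = -5%
    high_uplift: float  # plausible high-end volume change

def moves_the_needle(a: EventAssumption, threshold: float = 0.10) -> bool:
    """Crude sensitivity screen: material if either end of the plausible
    range shifts volume by at least `threshold` (an assumed cutoff)."""
    return max(abs(a.low_uplift), abs(a.high_uplift)) >= threshold

def carry_forward(assumptions, threshold=0.10):
    """Keep only material assumptions, tagged model/approximate/blind.
    Anything tagged 'blind' needs explicit written documentation."""
    return [(a.name, CATEGORY[a.evidence]) for a in assumptions
            if moves_the_needle(a, threshold)]

# Illustrative assumptions; names and ranges are invented.
assumptions = [
    EventAssumption("Holiday seen in history", "historical", 0.02, 0.04),
    EventAssumption("Partner sale", "estimate", 0.05, 0.25),
    EventAssumption("New product launch", "none", -0.05, 0.30),
]
for name, category in carry_forward(assumptions):
    print(f"{name}: {category}")
```

Here the holiday drops out as noise, while the partner sale and the product launch are carried forward, and the launch is flagged as the one the plan is betting on blind.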

Who should be asking these questions? If you are creating or collaborating in creating a forecast, you should. If you are the planner or manager who will use the forecast to build a plan, you should ask the forecaster whether she has considered them. And if you are wearing both hats, you need to ask yourself before you start planning.

The season always starts

The assumption nobody wrote down didn't stay invisible because I wasn't careful enough. I was careful. I was experienced. Every layer of the forecast was defensible by the standards of the methodology.

It stayed invisible because the structure that used to surface it was gone. There was no boundary for it to cross. No friction to force it into words. No peer to ask the question I didn't know to ask myself.

That is a different problem than inexperience. And it has a different fix.

Organizations consolidating roles are making a reasonable efficiency tradeoff. But they are also making an assumption nobody is writing down: that the person carrying the consolidated role will catch what the peer relationship used to catch for them.

They won't. Not always. Not because they aren't capable enough. Because that is not how assumptions work. They stay invisible until the condition they couldn't survive arrives.

The NFL season always starts. The question is whether your planning process is still in a position to notice before it does.